Introduction

A couple of months ago I described how to transfer data from Apache Spark to PostgreSQL by creating a Spark ForeachWriter in Scala.

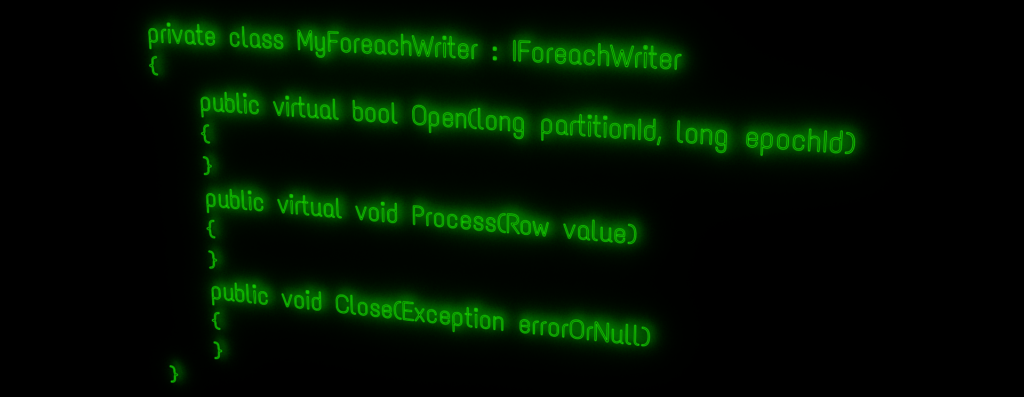

This time I will show how this can be done in C#, by creating a ForeachWriter for .NET for Apache Spark.

To create a custom ForeachWriter, you need to implement the IForeachWriter interface, which is supported from version 0.9.0 of .NET for Apache Spark onward. In this article I am going to use version 0.10.0.

The C# interface is documented in its source code:

https://github.com/dotnet/spark/blob/master/src/csharp/Microsoft.Spark/Sql/ForeachWriter.cs
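
To give a first impression of what implementing the interface involves, here is a minimal sketch. It assumes the Microsoft.Spark NuGet package is referenced and that the interface consists of the three methods `Open`, `Process` and `Close`, as described in the source file linked above. The class name `ConsoleForeachWriter` is hypothetical, and the writer only prints rows to the console instead of writing to PostgreSQL.

```csharp
using System;
using Microsoft.Spark.Sql;

// Hypothetical writer used for illustration only: it logs each row instead of
// writing to PostgreSQL. Marked [Serializable] on the assumption that the
// writer instance is serialized and shipped to the Spark workers.
[Serializable]
public class ConsoleForeachWriter : IForeachWriter
{
    // Called once per partition and epoch before any row is processed.
    // Returning false tells Spark to skip the rows of this partition.
    public bool Open(long partitionId, long epochId)
    {
        Console.WriteLine($"Open: partition {partitionId}, epoch {epochId}");
        return true;
    }

    // Called for every row of the partition if Open returned true.
    public void Process(Row value)
    {
        Console.WriteLine(string.Join(", ", value.Values));
    }

    // Called when processing of the partition ends; errorOrNull is null
    // when no error occurred.
    public void Close(Exception errorOrNull)
    {
        if (errorOrNull != null)
        {
            Console.Error.WriteLine($"Close with error: {errorOrNull.Message}");
        }
    }
}
```

Assuming `df` is a streaming DataFrame, such a writer would typically be attached with something like `df.WriteStream().Foreach(new ConsoleForeachWriter()).Start()`. A writer that targets PostgreSQL would presumably open its connection in `Open` and release it in `Close`, so that each partition gets its own connection.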

The example project I am …