With the multitude of existing projects and solutions for real-time data processing out there, it is easy to get lost among all the available options.

That is why I have started this blog series. I want to showcase an example pipeline that covers real-time data processing from beginning to end, that is, from data generation to data presentation.

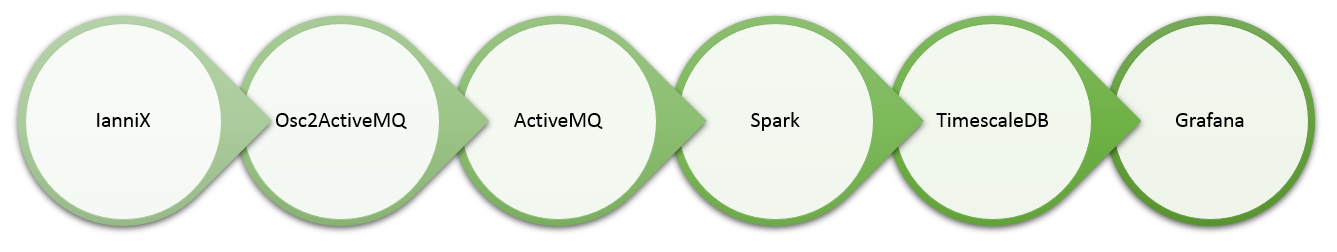

Below is an overview of the pipeline that I am going to use.

Here are the links to the related articles.

- Part 1 – Visual time series data generation

- Part 2 – OSC 2 ActiveMQ

- Part 3 – ActiveMQ, Spark & Bahir

- Part 4 – Data transformation

- Part 5 – Spark to PostgreSQL

- Part 6 – PostgreSQL & Grafana

Let’s take a closer look at the different components.

IanniX

Typically, a predefined set of data is used to play around with one or more of the processing pipeline components.

Let’s do something different this time. I will use a tool that can visually create and simulate real-time data. This way, I can easily compare the original data with the transformed data at the end of the pipeline, which simplifies validating the entire transformation and analysis process.

Since I know IanniX from a completely different domain (it is a graphical open-source sequencer for digital art), I think it will be fun to use it for simulating real-time IoT data. Therefore, this series starts by exploring how IanniX can be used for exactly that purpose.

Osc2ActiveMQ

IanniX supports several protocols for transferring the generated data to another system. I have decided to use the Open Sound Control (OSC) protocol, since it provides some features that make it particularly useful for this real-time data processing pipeline.
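To make the OSC part a bit more concrete, here is a minimal Python sketch of how an OSC 1.0 message is laid out on the wire: a null-terminated address string padded to a multiple of 4 bytes, a padded type tag string starting with `,`, and then the big-endian arguments. It only handles int32, float32 and string arguments, and the address `/cursor` used below is a hypothetical example, not necessarily what IanniX sends by default.

```python
import struct


def _pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC 1.0 requires for strings."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_osc_message(address: str, *args) -> bytes:
    """Encode an OSC message with int32 ('i'), float32 ('f') and string ('s') arguments."""
    type_tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            # bool is a subclass of int in Python; OSC uses separate T/F tags, not handled here.
            raise TypeError("booleans are not handled in this sketch")
        if isinstance(arg, int):
            type_tags += "i"
            payload += struct.pack(">i", arg)  # big-endian 32-bit integer
        elif isinstance(arg, float):
            type_tags += "f"
            payload += struct.pack(">f", arg)  # big-endian 32-bit IEEE 754 float
        elif isinstance(arg, str):
            type_tags += "s"
            payload += _pad(arg.encode("ascii"))
        else:
            raise TypeError(f"unsupported OSC argument type: {type(arg)!r}")
    return _pad(address.encode("ascii")) + _pad(type_tags.encode("ascii")) + payload


# Hypothetical cursor message: an integer id and a float value.
packet = encode_osc_message("/cursor", 1, 0.5)
```

A sender would then hand these bytes to a UDP socket, for example with `socket.socket(socket.AF_INET, socket.SOCK_DGRAM).sendto(packet, (host, port))`, where host and port are whatever the receiving side listens on.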

Bespoke.Osc is a .NET implementation of the OSC specification and is also available as a .NET Standard library.

NMS is the .NET Messaging API, which is one of the components provided by the Apache ActiveMQ project.

In this part of the series, I’ll introduce a Docker image that combines Bespoke.Osc with NMS to publish the generated data to ActiveMQ for further distribution.
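The actual bridge is built on Bespoke.Osc and NMS in .NET. Purely to illustrate the idea, here is a hedged Python sketch of its receiving half: it decodes an OSC message (int32, float32 and string arguments only) and turns it into a JSON text that could then be published to ActiveMQ. The JSON layout with `address` and `args` keys is a hypothetical choice for this sketch, not the format the Docker image necessarily uses.

```python
import json
import struct


def _read_string(data: bytes, offset: int) -> tuple[str, int]:
    """Read a null-terminated, 4-byte-padded OSC string; return it and the next offset."""
    end = data.index(b"\x00", offset)
    text = data[offset:end].decode("ascii")
    # Skip the terminator plus padding up to the next 4-byte boundary.
    return text, (end + 4) & ~3


def decode_osc_message(data: bytes) -> tuple[str, list]:
    """Decode an OSC message into its address and a list of arguments."""
    address, offset = _read_string(data, 0)
    type_tags, offset = _read_string(data, offset)
    if not type_tags.startswith(","):
        raise ValueError("missing OSC type tag string")
    args = []
    for tag in type_tags[1:]:
        if tag == "i":
            args.append(struct.unpack_from(">i", data, offset)[0])
            offset += 4
        elif tag == "f":
            args.append(struct.unpack_from(">f", data, offset)[0])
            offset += 4
        elif tag == "s":
            value, offset = _read_string(data, offset)
            args.append(value)
        else:
            raise ValueError(f"unsupported OSC type tag: {tag!r}")
    return address, args


def to_json_payload(data: bytes) -> str:
    """Turn one OSC packet into the (hypothetical) JSON text a bridge could publish."""
    address, args = decode_osc_message(data)
    return json.dumps({"address": address, "args": args})
```

Publishing that text to a queue or topic is the part NMS takes care of in the real bridge; it is left out here because it needs a running broker.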

ActiveMQ

As stated on its website, ActiveMQ supports a variety of cross-language clients and protocols. One of them is MQTT, which is considered the de facto standard protocol for the Internet of Things. So, if you have existing IoT devices that you want to use for testing the remaining parts of the pipeline, you are free to plug them in as well.
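To illustrate what such a device actually sends over MQTT, here is a small Python sketch that builds an MQTT 3.1.1 PUBLISH packet with QoS 0: a fixed header byte, the variable-length "remaining length", the length-prefixed topic name and the raw payload. The topic `sensors/temperature` is a hypothetical example; in practice, a client library such as Eclipse Paho would also take care of CONNECT, keep-alive and acknowledgements.

```python
import struct


def encode_remaining_length(length: int) -> bytes:
    """MQTT variable-length encoding: 7 bits per byte, high bit set when more bytes follow."""
    if not 0 <= length <= 268_435_455:
        raise ValueError("remaining length out of range for MQTT")
    out = bytearray()
    while True:
        byte = length % 128
        length //= 128
        if length > 0:
            byte |= 0x80  # continuation bit
        out.append(byte)
        if length == 0:
            return bytes(out)


def build_publish_qos0(topic: str, payload: bytes, retain: bool = False) -> bytes:
    """Build a QoS 0 PUBLISH packet (no packet identifier is needed at QoS 0)."""
    topic_bytes = topic.encode("utf-8")
    variable_header = struct.pack(">H", len(topic_bytes)) + topic_bytes
    body = variable_header + payload
    first_byte = 0x30 | (0x01 if retain else 0x00)  # packet type 3, DUP=0, QoS=0
    return bytes([first_byte]) + encode_remaining_length(len(body)) + body


# Hypothetical sensor reading published as plain text.
packet = build_publish_qos0("sensors/temperature", b"21.5")
```

The broker only accepts this packet after the client has opened a session with a CONNECT packet. ActiveMQ's MQTT transport connector commonly listens on port 1883, but check your broker configuration.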

Apache Spark

I am not sure yet how deep I will dive into Spark streaming, but I am quite certain that it will take multiple articles to provide a basic overview of real-time processing with Spark.

It seems to make sense to start with an article about getting the data from ActiveMQ into Spark, for which I will most likely use Apache Bahir. Next, I’ll probably explore some basic real-time transformations and how to persist the data in a database.
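As an example of the kind of basic real-time transformation mentioned above, here is a plain-Python sketch of a tumbling-window average: each reading is assigned to the fixed, non-overlapping window its timestamp falls into, and the values within each window are averaged. This is not Spark code; in Spark Structured Streaming a similar result would typically be expressed by grouping on the `window` function. The sketch only shows the underlying logic and assumes readings are `(timestamp_in_seconds, value)` pairs.

```python
from collections import defaultdict


def tumbling_window_average(readings, window_seconds: float) -> dict[float, float]:
    """Average (timestamp, value) readings per fixed, non-overlapping time window."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for timestamp, value in readings:
        # Align each reading to the start of its window.
        window_start = (timestamp // window_seconds) * window_seconds
        sums[window_start] += value
        counts[window_start] += 1
    # Return windows in chronological order.
    return {start: sums[start] / counts[start] for start in sorted(sums)}


readings = [(0.0, 1.0), (4.0, 3.0), (5.0, 10.0), (9.9, 20.0), (10.0, 7.0)]
averages = tumbling_window_average(readings, window_seconds=5.0)
```

A streaming engine adds what this batch sketch leaves out, such as handling late-arriving data and deciding when a window is complete.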

TimescaleDB

TimescaleDB is a very promising extension to the popular open-source PostgreSQL database. It is specifically optimized for handling time series data, which makes it a strong candidate for storing the kind of real-time data that we are dealing with.

Grafana

Last but not least, I plan to use Grafana to visualize the data at the end of the pipeline. Grafana is well suited for time series analytics and supports many different data sources, including PostgreSQL.

Epilogue

Now that you know what this blog series is about, I hope you will find it useful. If you have any comments or suggestions, please feel free to get in touch via the contact form.

So long and have a great time!
