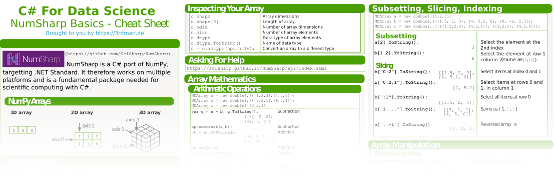

Recently, I published a small example project that uses the htm.core AI algorithm by consuming its REST API from C#. Because the API also transfers serialized multi-dimensional NumPy arrays, I was looking for an easy way to turn them back into C# objects. I tried a couple of approaches and finally settled on the NumSharp library, as I wanted a solution that works on multiple platforms.

I find DataCamp’s Data Science Cheat Sheets very useful and was hoping to find something similar for NumSharp. Well, I didn’t, but obviously that gave me a …