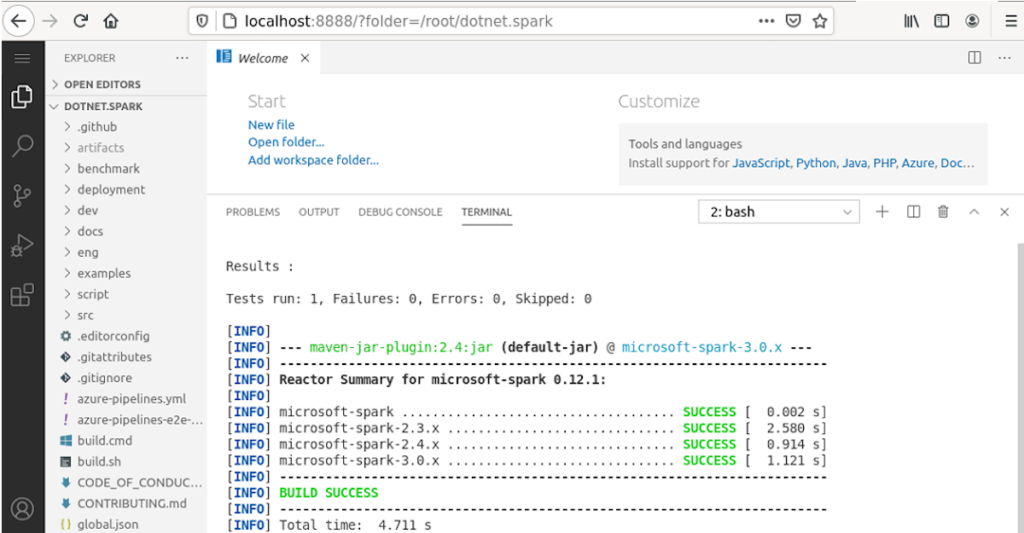

My last article explained how you can use .NET for Apache Spark together with Entity Framework to stream data to SQL Server. There is one caveat, though: you have to build Microsoft.Spark.Worker yourself.

This time I’ll show you how to build .NET for Apache Spark yourself, using VS Code in a browser, and how to build and run the C# examples.

Setting up your own development environment to build and test .NET for Apache Spark can be tricky and time-consuming. However, as a regular reader, you are probably aware that I like to use Docker …