.NET for Apache Spark 0.4.0 was released recently, so it is time to test whether it can be used for MQTT streaming as well.

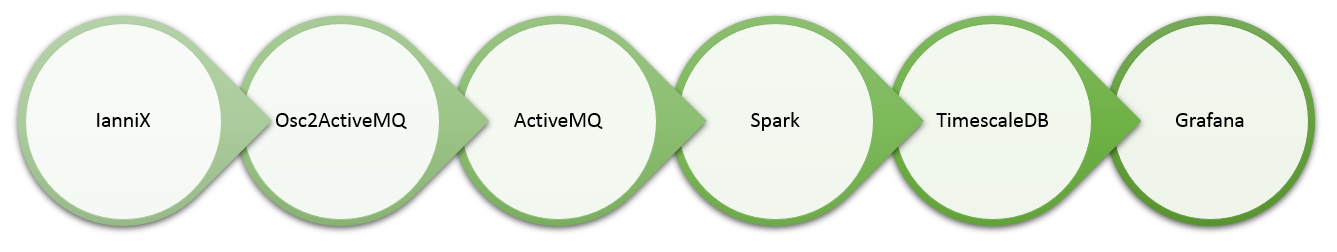

If you followed my series about a real-time data processing pipeline, you probably remember that I used Apache Bahir to retrieve streaming data from Apache ActiveMQ via the MQTT protocol. The data itself was generated by IanniX and forwarded to ActiveMQ using my osc2activemq Docker image.

Preparing .NET for Apache Spark for MQTT Streaming

For the most part, you can follow this quick intro tutorial, which walks … more