Do you want to test and debug your .NET for Apache Spark application with Visual Studio, but don’t want to set up Apache Spark yourself?

Then read along and find out how my docker image might be able to help.

Before we dig into the details, however, I specifically want to thank Devin Martin for sharing his idea about such a docker image with me!

Background

As you might be aware, you can debug your .NET for Apache Spark application directly in Visual Studio by starting the related DotnetRunner in Debug mode.

Obviously, that means that you first need to set up Apache Spark and its dependencies on your local machine.

However, if you are using docker, you could skip this potentially time-consuming process and use the docker image instead.

Test Environment

I have tested this on a Windows 10 system running Docker Desktop with Linux containers.

Here is an overview of the test environment components that I am using:

- Windows 10 (1903)

- Visual Studio 2019 Community Edition (16.3.1)

- Visual Studio Code (1.39.1)

- Docker Desktop for Windows (19.03.2)

Furthermore, I am also using the following extensions.

For Visual Studio 2019:

And for Visual Studio Code

Preparation

I am going to use the HelloSpark example project again, which I’ve already briefly described in this post.

For the docker image to work with this example, there is some preparation work required.

Build the project

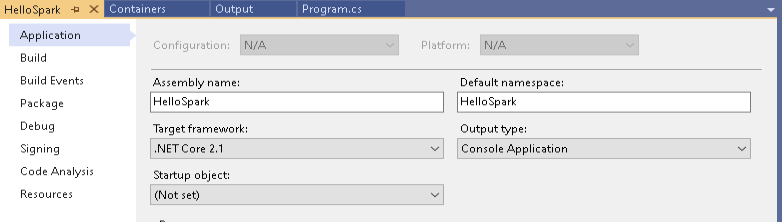

First, the .NET Core target framework version needs to match the one used by the docker image. The 3rdman/dotnet-spark:0.5.0-linux-dev image uses version 2.1, so the TargetFramework element in HelloSpark.csproj needs to be set to netcoreapp2.1.

<TargetFramework>netcoreapp2.1</TargetFramework>

If you are using Visual Studio 2019, you can achieve the same by setting the target framework in the project properties, as shown in the project properties screenshot.
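If you prefer the command line, a small check like the following can confirm the setting before you build. This is only a sketch, assuming a POSIX shell (for example Git Bash or WSL on Windows). The names target_framework and targets_framework are hypothetical helpers of my own, not part of the .NET tooling.

```shell
# Hypothetical helper: print the value of the <TargetFramework> element
# found in the given project file (prints nothing if the element is missing).
target_framework() {
  sed -n 's:.*<TargetFramework>\(.*\)</TargetFramework>.*:\1:p' "$1"
}

# Hypothetical helper: succeed only if the project file ($1)
# targets the expected framework ($2).
targets_framework() {
  [ "$(target_framework "$1")" = "$2" ]
}
```

For example, `targets_framework HelloSpark.csproj netcoreapp2.1 || echo "TargetFramework mismatch"` prints a warning when the project does not target the version used by the image.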

Before you can use the debugger with the image for the first time, you need to build the project. This step is necessary to create the bin/Debug folder with all the required files.

As you will see later, we will mount this Debug folder into our docker container, so that it has access to these files as well.

Without these files available, debugging will not work.

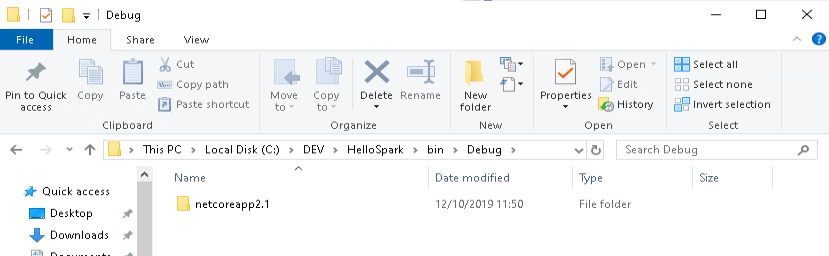

On my test system, the project folder is C:\DEV\HelloSpark, and once I’ve built the solution for the first time, I can change into the bin\Debug sub-folder. The full path to that folder is what we will need to map as a docker volume later. In my case, this is C:\DEV\HelloSpark\bin\Debug.

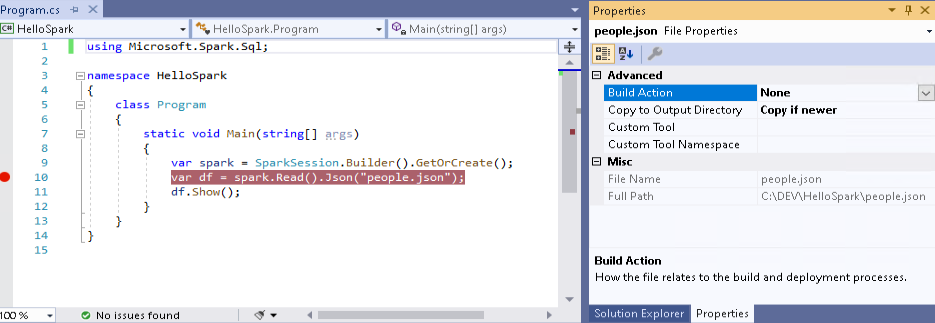

HelloSpark doesn’t do much. It reads data from a file named people.json and displays its content. Initially, this file is only located in the root directory of the project, and obviously needs to be made available to our docker container as well.

To do that, you need to set the “Copy to Output Directory” property of people.json to “Copy if newer”.
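Before starting the container, it can help to confirm that the build output and people.json really ended up in the folder you are about to mount. The following is a minimal sketch, assuming a POSIX shell (Git Bash or WSL) and that the build places its files somewhere below bin/Debug. The names has_file_below and debug_folder_ready are hypothetical helpers.

```shell
# Hypothetical helper: succeed if a file matching the name pattern ($2)
# exists anywhere below the given folder ($1).
has_file_below() {
  [ -d "$1" ] && [ -n "$(find "$1" -type f -name "$2" | head -n 1)" ]
}

# Hypothetical helper: succeed only if the folder holds at least one
# compiled assembly and the people.json input file.
debug_folder_ready() {
  has_file_below "$1" '*.dll' && has_file_below "$1" 'people.json'
}
```

After a build (for instance with `dotnet build`), running `debug_folder_ready bin/Debug || echo "bin/Debug is incomplete"` from the project folder tells you whether something is still missing.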

Starting the container

Now I can launch the container using the following docker command:

docker run -d --name dotnet-spark -p 5567:5567 -v "C:\DEV\HelloSpark\bin\Debug:/dotnet/Debug" 3rdman/dotnet-spark:0.5.0-linux-dev

As you can see, C:\DEV\HelloSpark\bin\Debug is now mapped to the /dotnet/Debug folder within the docker container, which is used by the container’s Spark process.

You probably also noticed the mapping for port 5567. This is the default port of the DotnetBackend for DotnetRunner debugging.

For more details on the container configuration options, check out the related docker hub page, and have a look at this blog post.
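Once the container has been started, a quick look at its state can save some head-scratching before you press F5. The sketch below assumes a POSIX shell and the container name dotnet-spark from the command above. The function names container_running and debug_port_binding are hypothetical; they only wrap the standard docker ps and docker port commands.

```shell
# Container name as used in the docker run command.
CONTAINER=dotnet-spark

# Hypothetical helper: succeed if a container with that name is running.
container_running() {
  [ -n "$(docker ps --quiet --filter "name=$CONTAINER")" ]
}

# Hypothetical helper: print the host address that the debugging port
# (5567) of the container is published on.
debug_port_binding() {
  docker port "$CONTAINER" 5567
}
```

If `container_running` fails, `docker logs dotnet-spark` usually shows why, and `docker rm -f dotnet-spark` removes the container so that you can start over.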

Debugging

Now that we have the docker container up and running, we can start debugging our .NET for Apache Spark HelloSpark project by pressing F5.

As you can see from the recording above, it works as expected, and with the Visual Studio Container Tools extension we can directly inspect the related DotnetRunner output as well. Very nice!

Let’s see how debugging works with Visual Studio Code now.

Works as well. Excellent!

Now that you have an easy way to debug a .NET for Apache Spark application without the need to set up Apache Spark yourself, there are no more excuses not to play around with it (as long as you are using docker, of course) 🙂

18. October 2019
